This article is the first instalment of Aparna Ramanujam’s two-part series ‘As We May Teach’, which evaluates the privacy concerns and cognitive learning aspects of ed-tech services.

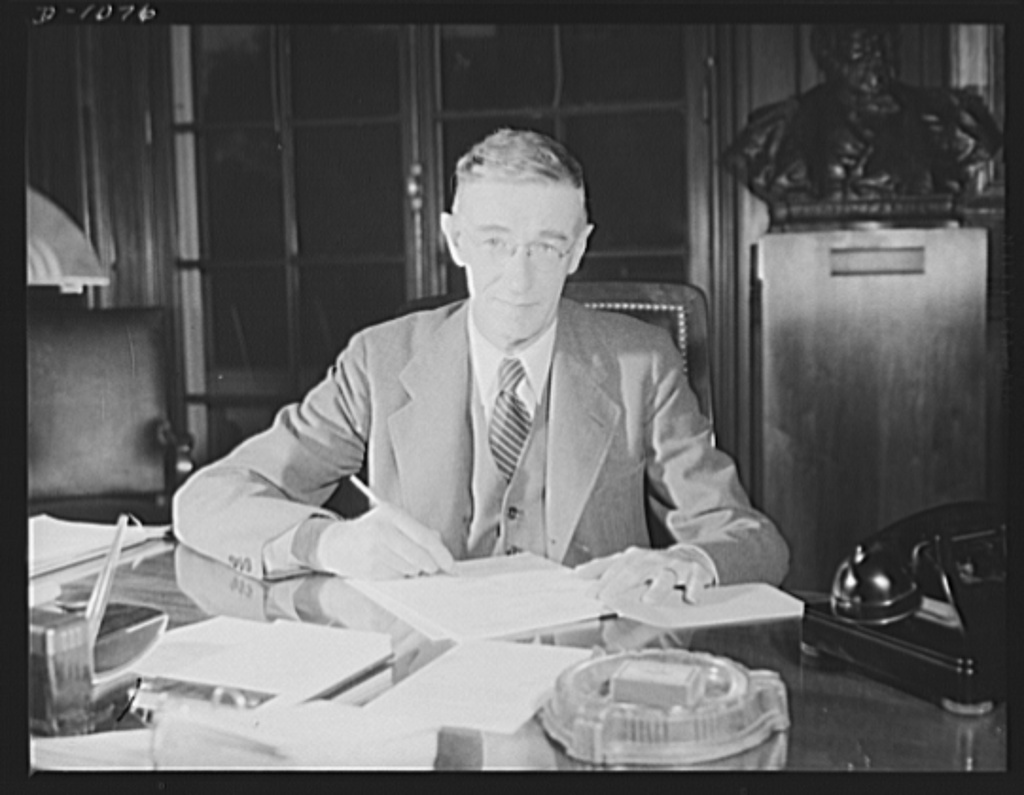

In his landmark essay titled “As We May Think”, Dr. Vannevar Bush predicted the future in 1945 when he waxed eloquent about “a device which is mechanized so that it may be consulted with exceeding speed and flexibility… and is an enlarged, intimate supplement to [man’s] memory.”

This essay inspired scientists all over the world and helped pave the way for inventions such as the Internet, the World Wide Web, and personal computers. Strikingly, although Bush details the potential use of technology in multiple domains–healthcare, law, chemistry, even history–education is notably missing. Today, however, it’s a different story.

Everyone and their neighbour today are aware of the transformative power of technology in education. It has the ability to reach every student, regardless of physical barriers. It can allow every student to learn at their own pace, and ensure complete mastery of academic content. In a country like India, where social barriers like caste and gender often affect student attendance and behaviour in the classroom, technology can render those barriers non-existent by allowing each student to operate independently while still requiring some collaboration.

Clearly, this potential for immense positive impact has captured the world’s imagination. In the UK, schools spent £900 million on educational technology products in 2017 alone. More than 4,000 ed-tech startups have been launched in India over the past five years, with a total value exceeding US$2 billion (though none except Byju’s has been profitable). The United States of America leads the pack, with its total ed-tech spending crossing US$13 billion, while global expenditure on ed-tech is expected to reach US$342 billion by 2025.

The excitement is palpable, but is there a darker side to this story?

The Devil in the Details

Across the world, governments are generally reluctant to regulate the ed-tech space, mainly out of fear that regulation will stifle innovation. This reluctance increases tenfold when it comes to controlling the most valuable technological commodity: data.

Given that the ed-tech industry’s main goal is to personalize learning for each student, there is a need to collect as much student data as possible. Unlike in India, data collected in the US from each student includes (but is not limited to) biometric information, academic progress spanning several years, disciplinary information, web browsing history, and IP addresses. In many cases, ed-tech vendors directly ask schools to stand in for parents and consent to the sharing of student data, a practice that is currently permitted by many international data regulations.

It’s not hard to see how this could become dangerous: school servers house extremely sensitive student information, such as home addresses and financial status, so one faulty move could push all of this data into the wrong hands. As recently as 2017, cybercriminals hacked into school servers in several American districts, using personal student data to threaten and extort students and their families.

“The Swedish data protection authority fined a high school under the GDPR because it was using facial recognition, arguing the students’ consent could not be freely given because the school administration has a moral authority over them.” https://t.co/D5ZiA1Z4i8 cc @elanazeide

— Frank Pasquale (@FrankPasquale) September 29, 2019

Moreover, data protection rules are often applicable only within specific geographic boundaries, which means that companies located or operating outside those boundaries are not required to comply with those laws, even if they collect data from the citizens living within them. In some regions (like the EU), data protection regulations are strict enough to reduce malicious use of student data even outside their territory. However, despite India’s status as the second-most lucrative market for ed-tech, our data protection regulations are far from adequate.

Currently, the use of personal data (that is, data collected from any Indian citizen) is governed by the Information Technology Act, 2000, and the rules issued under it in 2011. These provisions make no mention of age-specific or industry-specific regulations on data processing. Even where they address individual data privacy, they give an incredibly narrow definition of what constitutes “sensitive personal data”. More worryingly, they leave easy loopholes for companies to override the restrictions placed on processing, or selling, personal data.

To illustrate, the IT Act permits companies to transfer personal data to third parties if the user (that is, the student) consents to it. Here, consent can take one of two forms: a user can either “opt in” (i.e. tick a box that says “I allow my data to be used for analysis purposes”) or “opt out” (i.e. remove the tick from a pre-ticked box that says the same thing). Although both options let users control how their data is used, both require the user to pay exceedingly careful attention to the terms of use before signing up for a product. In the context of ed-tech companies, where the main users are children below the age of 18, relying on these forms of consent means that children will sign up for products without fully understanding how their data will be used, placing them at great risk.
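The practical difference between the two forms of consent comes down to what happens when a user ignores the checkbox. The short Python sketch below is only an illustration: the `ConsentForm` class and its field names are hypothetical and are not taken from the IT Act or from any real product.

```python
from dataclasses import dataclass


@dataclass
class ConsentForm:
    """A hypothetical sign-up checkbox for data sharing."""
    pre_ticked: bool  # the state of the box when the page loads

    def shares_data(self, user_toggled: bool) -> bool:
        # A user who toggles the box flips the default;
        # a user who ignores the box is bound by the default.
        return (not self.pre_ticked) if user_toggled else self.pre_ticked


OPT_IN = ConsentForm(pre_ticked=False)   # the user must tick the box to share
OPT_OUT = ConsentForm(pre_ticked=True)   # the user must untick the box to refuse

# A child who skips past the checkbox without reading it:
print(OPT_IN.shares_data(user_toggled=False))   # False: no data is shared
print(OPT_OUT.shares_data(user_toggled=False))  # True: data is shared by default
```

Under an opt-out design, inattention counts as consent, which is why the default matters so much when the users are children.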

India: EdTech firm,Vedantu, experienced late September data breach, exposed names, emails, IP addresses, gender identifiers, passwords, phone #s, time zones and web activity of 680K customers. All data was encrypted. https://t.co/ehi3llZf6V #infosec

— Carol Brani (@CarolOnAdvLaw) November 4, 2019

A new piece of legislation, the Personal Data Protection Bill, 2019, attempts to improve upon the existing Act to ensure that the data privacy of every citizen is protected. Unlike the current IT Act, the PDP Bill defines regulations for collecting and processing children’s data. While the Bill does not explicitly specify details around the sale of children’s data, it does discuss the role of “guardian data fiduciaries” (entities that are involved in the collection and/or processing of children’s data of any kind), placing heavy restrictions on how they may use that data.

The Personal Data Protection draft bill, cleared by the Cabinet, aims to create a “strong and robust data protection framework for India” as it fixes obligation of data fiduciary and places a restriction on transfer of personal data outside India. https://t.co/whiMMmM6Wc

— The New Indian Express (@NewIndianXpress) December 11, 2019

The PDP Bill also states that the Central Government can declare itself (or any Government agency) exempt from the Act, i.e. the Government can access anonymized sensitive personal data of any Indian citizen in order to make evidence-based decisions about important policies. At first glance, this appears reasonable: what’s the harm in accessing de-identified data?

However, as several reports have pointed out, anonymized data isn’t as safe as it sounds: given access to a sufficiently large number of data points, an expert can isolate individuals fairly easily, which defeats the purpose of anonymization. More alarmingly, the Government can access de-identified data without declaring a specific objective for its use. This is concerning for a multitude of reasons: if any institution (Government or otherwise) can access and isolate ten years’ worth of a growing child’s data, there’s no telling what they could use it for.
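To see why more data points make de-identified records easier to single out, consider the minimal Python sketch below. The records and field names are invented purely for illustration, and counting unique attribute combinations is only one simple way to gauge re-identification risk; it is not a method described in the PDP Bill or in the reports mentioned above.

```python
from collections import Counter

# Hypothetical "anonymized" student records: names are removed, but
# indirect identifiers (pin code, birth year, gender) remain.
records = [
    {"pin": "560001", "birth_year": 2008, "gender": "F", "score": 71},
    {"pin": "560001", "birth_year": 2007, "gender": "F", "score": 64},
    {"pin": "560001", "birth_year": 2009, "gender": "M", "score": 88},
    {"pin": "600042", "birth_year": 2008, "gender": "M", "score": 59},
    {"pin": "600042", "birth_year": 2010, "gender": "F", "score": 93},
]


def unique_fraction(rows, keys):
    """Fraction of rows whose combination of `keys` appears exactly once."""
    if not rows:
        return 0.0
    combos = Counter(tuple(row[k] for k in keys) for row in rows)
    singled_out = sum(1 for row in rows if combos[tuple(row[k] for k in keys)] == 1)
    return singled_out / len(rows)


# Each extra attribute narrows the crowd a student can hide in.
print(unique_fraction(records, ["gender"]))                       # 0.0
print(unique_fraction(records, ["pin", "gender"]))                # 0.6
print(unique_fraction(records, ["pin", "birth_year", "gender"]))  # 1.0
```

In this toy dataset, gender alone singles out nobody, but combining pin code, birth year, and gender makes every record unique. Anyone who already knows those three facts about a child could then read off the rest of that child's record.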

For now, the PDP Bill is still under review in Parliament, which means that we remain under the governance of the IT Act.

The current lack of adequate governance means that the onus falls on companies to protect individual data privacy. Tech companies can choose to abide by larger data protection regulations such as the GDPR, and to have internal ethics committees to protect user data privacy. However, it is crucial to note that these are choices: the IT Act does not require companies to follow these regulations. So right now, this leaves us, and our children, at the mercy of every individual corporate entity that collects our data.

Given the sheer number of governance issues, it’s important to consider if ed-tech is worth all this risk. The world believes that education technology is creating an incredible positive impact, but is that what the evidence says?

Featured photograph courtesy of Taylor Vick on Unsplash
