In a recent incident at Narsee Monjee Institute of Management Studies (NMIMS), students complained of harassment by proctors after their exams were conducted online and monitored through an online proctoring solution (Mettl, in this case). Their personal information, ranging from ID cards to examination forms, was stored on the college’s Learning Management System (LMS), shared with Mettl, and later used by proctors to contact the students directly on social media platforms and messaging apps.

With schools and colleges still shut because of the pandemic, and classes primarily taking place online, teachers and students alike are likely to keep depending on LMSs. However, instances like the NMIMS episode show the risks of integrating an LMS with third-party applications without understanding the latter’s terms of use and privacy policies.

But first, what are Learning Management Systems?

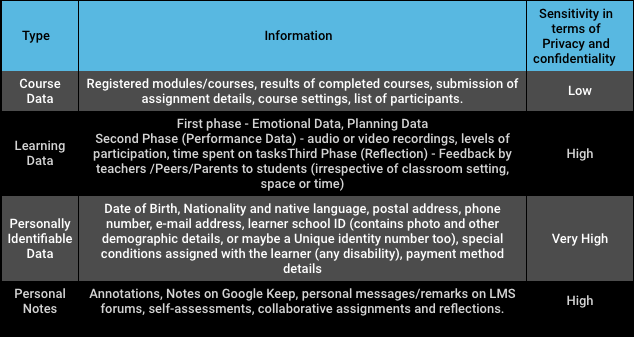

Traditionally, Learning Management Systems (LMS) facilitate e-learning by bridging the gap between teachers and students through interactive, non-linear, and dynamic sets of information. Recent advances in technology have led to the rise of the Advanced-LMS (ALMS), a “high solution software package” that allows for the storage and analysis of administrative content and knowledge resources.

Students can use ALMSs to access course information, grades, posts, blogs, and forums. College administrators, on the other hand, can ‘manage’ users, ‘track’ learning progress, ‘automate’ administration, and ‘measure’ students’ performance by using the Monitoring and Analysis tools for E-Learning Programs (MATEP) embedded into these platforms.

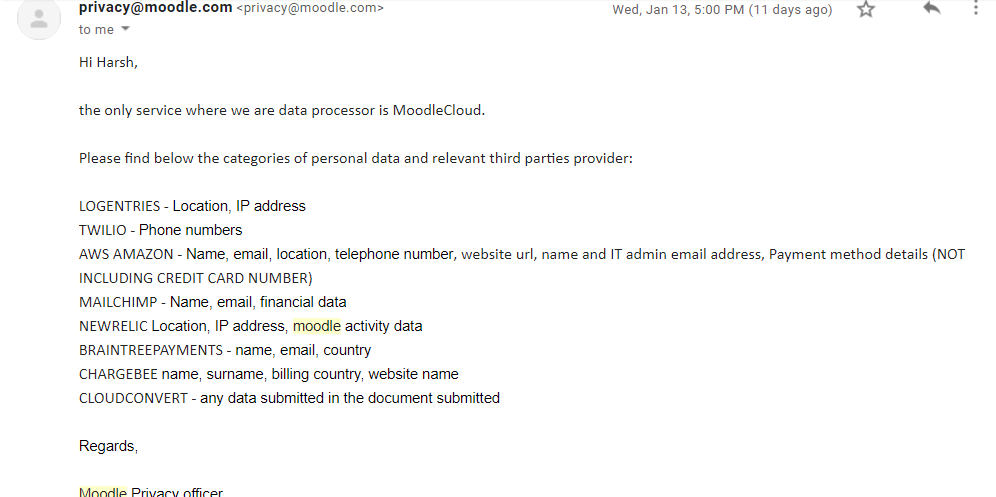

The education market offers three main LMS categories: a) proprietary LMSs (commercial ones like Canvas and Desire2Learn), b) open-source LMSs (Moodle, Sakai), and c) cloud-based LMSs (DigitalChalk, Docebo, TalentLMS). All categories of LMS rely on a combination of third-party services to provide customised products for their clients. For instance, in the aftermath of the NMIMS incident, this author contacted Moodle (a free and open-source LMS widely used in India) and asked about the categories of personal data it shares with various third-party providers.

Clearly, the volume of personal information going out is vast. A few other studies on the Moodle LMS further indicate how much data can be stored on an LMS and shared with third parties.

Such massive troves of data are shared continuously between educational institutions and third-party providers. Both Central and state governments are rolling out ALMSs, primarily using analytics to predict students’ learning outcomes and teachers’ performance outcomes. During COVID-19, the government has adopted a multi-pronged strategy to ensure a resilient education system. The data this generates could potentially be harnessed arbitrarily by the government in conjunction with corporations.

What do educational authorities do with this data?

Students’ LMS data collected by each school can be segregated based on race, religion, class, income, grade, and learning outcomes. This can help establish a standardized curriculum and testing method, much like the US No Child Left Behind (NCLB) program. In a similar vein, the government intends to set up a national assessment centre called PARAKH (Performance Assessment, Review, and Analysis of Knowledge for Holistic Development) to standardize norms for student assessment and evaluation and to help boards shift their assessment patterns in line with the stated objectives of the National Education Policy 2020 (NEP 2020). LMS data could help achieve these outcomes.

That this information can be used for standardization is not far-fetched. Before the NEP 2020, the Gunotsav scheme, launched in 2009, attempted to achieve similar levels of standardization in Gujarat. Under the scheme, said to be Prime Minister Narendra Modi’s brainchild, state government officials receive first-hand information about each student and teacher in the state, either through surprise interactions or through daily monitoring on centralized dashboards (ALMS) operated from a Command and Control Centre. Through such systems, the State Education Department can observe and monitor both student and teacher data in real time. On the surface this seems like a good move: setting standards would incentivize teachers to perform better, allowing students to learn from able teachers.

What I found: Gujarat’s #Gunotsav shows that competitive school assessments do not transform school & education quality https://t.co/O6CPpu0u8v via @scroll_in

— anjali mody (@AnjaliMody1) April 12, 2018

The Gujarat department now plans to track 62 lakh students and nearly 40,300 schools through online platforms to assess learning outcomes. Though a learning outcome-based child tracking system was launched in 2013, the pandemic has intensified the government’s digital monitoring ambitions. Microsoft has been roped in for the online monitoring dashboard (LMS). The data generated under the Gunotsav 2.0 scheme (on academic performance assessment, co-curricular activities, community participation, available infrastructure, and grading of teachers) is fed into the Microsoft machine learning system, and each student’s name and Unique ID are used to predict learning outcomes. This marks the beginning of a surveillance network, assemblage, or education stack.

What are the impacts of collecting all this data on students and teachers?

Overdependence on LMSs gradually creates a climate of mass surveillance. For example, Mettl comes integrated with third-party plug-ins like Microsoft Office. Moodle uses Amazon S3 services for hosting data, and thereby shares data with that platform. Both cases potentially give rise to ‘surveillance capitalism’, wherein predictive profiling occurs, leading to the reorientation of students’ and teachers’ behaviour.

For example, the Gunotsav scheme, which makes top grants to schools conditional on student and teacher performance, indirectly nudges schools to deploy technology to determine a teacher’s “value” in the classroom. Apart from increased surveillance, this can lead to changed pedagogy, where teachers may spend more time on completing portions in order to be perceived as ‘efficient’ than on critical thinking or engagement. This is antithetical to the NEP’s ideals, which prioritise holistic, slow learning.

Further, the continued unregulated use of ALMSs might lead to unintended consequences, as has already been seen in the West. In New York, a teacher named Sheri Lederman was declared incompetent to teach by an algorithm using statistics available on the school’s LMS. In 2016, a court found the algorithm to be ‘arbitrary and capricious’, given the opacity of the exact parameters used by the model.

In August 2020, thousands of students in the U.K. protested against an algorithm used to predict A-Level exam results. The algorithm used three inputs from an institution’s ALMS: a) the historical grade distribution across schools, b) the likely rank of each student within a school, based on teachers’ evaluation (Centre Assessed Grade, or CAG), and c) each student’s previous exam results per subject. In this case, the algorithm was fed an already biased dataset from the ALMS, leading to latent algorithmic bias.
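To see why a model of this kind can penalise individual students, consider a deliberately simplified sketch. It is not the regulator’s actual model: it uses only two of the three inputs described above (a school’s historical grade shares and the teachers’ rank order), and the function name, grade scale, and sample data are all hypothetical. Each student receives whatever grade the school’s past results allot to their position in the ranking.

```python
from typing import Dict, List

# Hypothetical grade scale, best to worst.
GRADES = ["A*", "A", "B", "C", "D", "E", "U"]


def assign_grades(ranked_students: List[str],
                  historical_share: Dict[str, float]) -> Dict[str, str]:
    """Map a teacher-ranked cohort onto a school's historical grade shares.

    ranked_students: student IDs ordered from strongest to weakest.
    historical_share: fraction of past students at this school per grade.
    """
    # Build cumulative upper boundaries, e.g. A <= 0.2, B <= 0.6, C <= 1.0.
    boundaries = []
    cumulative = 0.0
    for grade in GRADES:
        cumulative += historical_share.get(grade, 0.0)
        boundaries.append((cumulative, grade))

    n = len(ranked_students)
    result = {}
    for position, student in enumerate(ranked_students):
        # Midpoint percentile of this student within the cohort (0 = top).
        percentile = (position + 0.5) / n
        for upper, grade in boundaries:
            if percentile <= upper + 1e-9:
                result[student] = grade
                break
        else:
            # Shares did not sum to 1; fall back to the lowest grade.
            result[student] = GRADES[-1]
    return result


# A school that has never awarded an A* in the past.
past_results = {"A": 0.2, "B": 0.4, "C": 0.4}
cohort = ["s1", "s2", "s3", "s4", "s5"]  # s1 is ranked highest
print(assign_grades(cohort, past_results))
# {'s1': 'A', 's2': 'B', 's3': 'B', 's4': 'C', 's5': 'C'}
```

In this toy example the top-ranked student cannot receive an A*, however strong their own work, because no earlier student at the school did. The bias sits in the historical data rather than in any single line of the code.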

Downgraded A’level students at schools that applied own @ofqual algorithm & @ASCL_UK guidance are in despair not just furious! Their A’levels remain downgraded, no uni places, no apprenticeship, no jobs. UTURN didn’t deliver JUSTICE for them. #CAGsappeal @GavinWilliamson @itvnews https://t.co/XyDjOS1i1B

— Catherine Buckley (@buckleybun1234) September 6, 2020

The deleterious impact on both teachers and students, the lack of a grievance redressal system, and the systemic biases inherent in Indian society (along lines of caste, class and gender), coupled with the absence of data protection legislation, create a detrimental climate for the use of ALMSs. Biases matter in the context of technology-assisted learning for two reasons: they influence students’ emotions and contribute to under-achievement, and when the people producing the algorithms are themselves biased, the technology they develop will exacerbate surveillance, inequity, partiality and digital divides. Such systems need a legal framework outlining accountability and grievance redressal measures.

How can educational institutions be held accountable?

Sheri Lederman’s case showed that because algorithms are proprietary (that is, licensed by developers), school administrations, teachers, and students are often unable to appeal against the system’s conclusions. However, a few specific recommendations, if built into India’s personal data ecosystem, could improve grievance redressal and system accountability.

When it comes to the government, redressal systems can be addressed within the yet-to-be-implemented Draft Personal Data Protection Bill, 2019 (PDP). The PDP talks explicitly about protecting children’s data and lists much higher accountability standards for ‘guardian data fiduciaries’ (GDFs), or entities which collect children’s data. Chapter IV of the Bill explicitly prohibits using children’s data for profiling, behavioural tracking, targeted advertising, and predictive analytics. To protect children against the threats of an LMS, measures that currently apply only to ‘significant data fiduciaries’, such as the Data Protection Impact Assessment (DPIA), should also apply to GDFs. A DPIA helps identify and assess risks to students by outlining necessary and proportionate compliance measures.

The Data Protection Authority (DPA) proposed under the PDP Bill can also institute a ‘Code of Conduct’ for data fiduciaries in consultation with sectoral regulators and stakeholders, which in this case would include the CBSE, the UGC, and Moodle, among others. The DPA should also engage with National and State Education Boards to prescribe a Code of Conduct that examination protocols must adhere to on data handling, data sharing, and auditing, to be enforced when a school deploys proctoring solutions. India can take specific cues from a sectoral US law, the Family Educational Rights and Privacy Act (FERPA), and evaluate how its approach can be used in India.

Those who build and deploy advanced systems such as ALMSs should owe a ‘duty of care’ to consumers or data subjects and be liable for both criminal and civil wrongs. Though the PDP talks about offences and penalties in a criminal context, the government should engage with India’s Law Commission to re-evaluate civil wrongs in an AI context. This would demand changes to the existing consumer protection law to establish negligence under the concept of ‘duty of care’. The Commission should note Marguerite Gerstner’s suggestions on how an AI system can be made accountable by establishing a manufacturer’s breach of duty, and place them in the Indian societal context.

When it comes to corporations, each ‘guardian data fiduciary’ engaged in the development, design and deployment of ALMSs and AI systems can publish a transparency report on its website and supply it to data subjects. This report should, among other things, indicate the age group of children to which the LMS applies, as children of different age groups should be protected differently.

Schools themselves can give students a sample notice setting out the amount of personal data collected by an ALMS. The notice should state that ‘X University/school will only collect the data and any vendor is prohibited from storing or disclosing this information.’ Once X University/school no longer needs the data, a default data deletion policy should apply. If a student does not consent, they should be allowed to take the exam through an alternative method (not through online proctoring solutions) and to withdraw any non-personal data they have uploaded to the LMS.